What is “artificial intelligence”? Is it a fancy technology? A 24-hour surveillance system? A management consulting buzzword? A PR effort to inflate corporate share prices? A political project designed to shape the world more to the liking of the billionaire class? A way to replace needy human workers with machines?

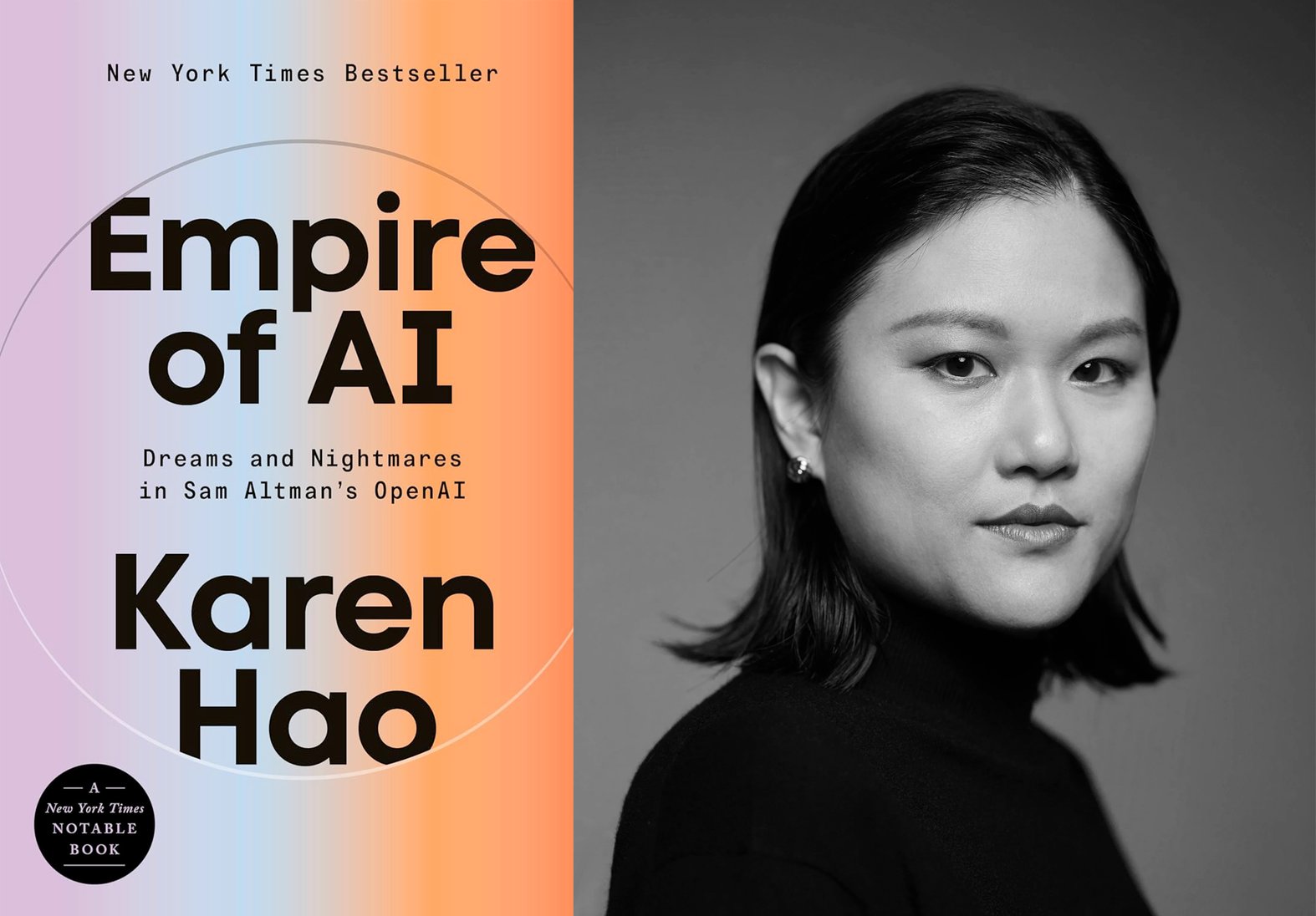

Perhaps it’s all of that—and more. In her groundbreaking book Empire of AI: Dreams and Nightmares in Sam Altman’s OpenAI, award-winning journalist Karen Hao argues that AI—and the profit-driven infrastructure that surrounds it—is a colonial project. What OpenAI boss Altman and his fellow ideologues in Silicon Valley are pursuing, Hao says, is not just corporate power but imperial power. They are building empires. And as history shows, empires are built on resource extraction, particularly the old-fashioned kind: of labor, energy, minerals, land, water.

Seemingly overnight, tech elites’ feel-good climate promises have evaporated, having been seamlessly swapped for slippery promises that so-called “artificial general intelligence” will save the planet for us. Never mind that the much-hyped artificial general intelligence, or AGI, is a fantastical concept that has no agreed-upon definition, or that, more fundamentally, it appears to be nowhere close to existing. In Big Tech’s frenzied pursuit of the “hyperscale” AI dominance that evangelists claim will unlock AGI, as well as its expanding alliances with fossil fuel-backed petrostates and authoritarian political movements, the industry has become an increasingly central contributor to the climate crisis.

In an October conversation with Drilled, Hao discussed how Silicon Valley giants appear to be following the oil and gas industry’s playbook of disinformation and deceit; how Altman and OpenAI’s secrecy and disingenuous rhetoric transformed the field of AI research into corporate PR; and why the destructive trajectory of AI scale and commercialization is not inevitable—no matter what its power-hungry proponents would have you believe.

The written version of the conversation below has been condensed and edited significantly for clarity and accuracy.

Prefer to listen? The extended conversation with Hao is available on our podcast.

One of the things I found most troubling [about Empire of AI is] how artificial intelligence, or the concept of it, is an ideology in Silicon Valley.

If we look at the history of AI and the founding of the scientific discipline in 1956, even then it was already an ideological project. It was a group of scientists deciding that they wanted to try and replicate human intelligence in computers. That’s a very political choice. Why do that? It’s not really clear what the scientific reason is. There was an intention behind choosing that path and setting up a field that defined its goal as attempting to mimic, and therefore potentially replace, humans.

Fast forward to today. That ideology has not only continued to permeate and drive the industry and its goals, it has now become much more extreme because, layered on top of this fundamental assumption that it is somehow inherently good to recreate human intelligence in computers, we now have a particular approach to doing that that these companies have indexed on—scaling these models at all costs—which is also an ideology. There is no scientific basis for choosing that approach. There’s no scientific reason why these companies are trying to dominate as monopolies. This is also based on these worldviews and values that a certain small group of people have chosen. And that group of people have an extraordinary amount of power to make that choice affect everyone.

People keep thinking that this is just a business story—it’s Silicon Valley doing commercial things and trying to drive profit maximization. That is only half the story when it comes to AI. There is a deep ideological drive to recreate human intelligence, and a belief in the idea that this is somehow going to solve all of our problems or potentially destroy us, and that in order to do all these things we have to consume the entire planet’s resources. All of those [ideas] need to be scrutinized for what they are, which is an extremely narrow view about how the world is and how it should be.

The idea of artificial general intelligence, or AGI, is something people hear about a lot these days. It’s great for headlines and great for CEOs of these companies. But they also use the idea of AGI as a pretext. Can you talk about how they use this hypothetical concept to basically justify whatever it is they want to do?

The loosest definition of AGI is the point at which an AI system fully encapsulates all the dimensions of human intelligence, which was the original definition of AI. The reason AGI is so ill-defined is that we don’t have scientific consensus around what human intelligence is. There’s no neurological, biological, psychological definition for this special characteristic that humans seem to have more of than other animal species. In fact, when you think about the history of trying to measure, quantify, rank human intelligence, it has always been driven by extremely dark motives: eugenics, the justification of oppressing different groups of people. This is why AGI remains a nebulous milestone: different people have totally different ideas of what it means to have finally achieved human-level intelligence.

It becomes a tool that executives use to their advantage, and Altman in particular. They can paint these visions of AGI based on whatever is necessary to overcome a particular hurdle that they're facing in that moment. When Altman is speaking in front of Congress, he’ll paint AGI as a magical wand that you can wave that’s going to solve climate change, cure cancer, do all these things that people have wanted for a very long time. When you’re talking to CEOs, AGI is suddenly an employee—something that they can buy as a service so that they don’t have to hire real humans. And then when you’re talking to an average consumer, AGI [is] suddenly a friend or an assistant or whatever it is that compels that person to put down money, or compels the regulators to not put in regulation.

AGI shape-shifts and morphs in these ways. It’s just a rhetorical tool for getting what they want.

This is probably obvious to a lot of people, but I think still bears emphasizing: Corporate CEOs salivate at the idea of replacing workers with machines. You can see how the concept of AGI would be so appealing to a CEO.

Absolutely. This is why this [the promise of AGI] has continued to be a self-perpetuating myth. There is such a high demand for that magical thing that it represents. All of the market incentives and political incentives push Silicon Valley to continue propping that myth up and using it to dominate the narrative.

Something you trace throughout the book is the way that the promise of AGI solving the climate crisis has been used to rationalize unfathomable environmental costs. You describe it as “a huge gamble”: They’re basically saying, Resource consumption, land grabbing, human exploitation [do not matter] because this magical mystery technology will solve this crisis—that we’re contributing to in a massive way—at some point in the future.

To quantify what level of environmental impact we’re talking about, this [2025] McKinsey report estimated how much energy would be required to sustain the current projections of AI industry growth. We would need to add two to six times the amount of energy consumed by California onto the global grid in five years, just to sustain the AI industry and its data center demands. That is insane. That is possibly six times the energy use of the fifth-largest economy in the world. Other projections have said more energy than all of India, the third-largest energy consumer at this point globally. And most of that will come from fossil fuels.

We are not only talking about an acceleration of climate change, we are also talking about an acceleration of air pollution. We are already seeing reports of methane gas turbines, for example, being used to power Colossus, the supercomputer that Elon Musk built in Memphis, Tennessee, for xAI to train Grok. Those turbines are pumping thousands of tons of pollutants into working-class communities that already have a long history of being denied the fundamental right to clean air. We also see the exacerbation of a huge crisis of people getting access to clean drinking water around the world, in part due to the acceleration of climate change. AI exacerbates that. This investigation from Bloomberg found that two-thirds of the data centers being built for the AI industry are going into places that are already scarce on freshwater resources. Data centers require freshwater to make sure that these extremely expensive computer chips don’t overheat and bust.

You have this multi-layered environmental public health crisis being pushed to the brink and undermining people’s fundamental ability to live a decent quality life. That’s happening now. This is not speculative. This is literally playing out currently. And the AI industry justifies all of this based on a speculative assumption that maybe we will get to a point where these AI systems can then fix all of those problems. That is the thing that is so mind-blowing to me: In this AI era, somehow, future speculation can be used to justify present-day, real-world, substantial harm.

The question that I always ask people is, how long do we wait for this potential speculative future? How much harm do we suffer before we realize that future might not arrive, and we might not survive these harms? Of course, the people who are ultimately making the decisions, they’re not the ones that bear the brunt of these harms, of [the] climate having changed already. They’re not the ones breathing in this toxic air from fossil fuels burning in their communities. So for them, the answer to how long we can bear to do all these things before we potentially reach utopia is really long compared to most of the rest of the world.

[One of the] ways in which the tech industry seems to be following the fossil-fuel industry playbook [is] this narrative of, a) this is inevitable, it’s happening whether we like it or not, and so we might as well be the ones doing it; and, b) this is the price of progress, and if you’re against this, then you’re against human progress. These powerful narratives of inevitability and progress [are] the exact same rhetoric that the fossil-fuel industry has been deploying in some form or another for decades.

It is the trump card for any industry that knows that they’re engaging in something that’s deeply incorrect. These technologies are not inevitable. The thing that I spent a lot of time trying to do with my book is document the moments in which OpenAI employees made decisions that fundamentally shaped the trajectory of how the technology was introduced into society based purely on whims of judgment. Technology is always a product of human choices, and sometimes those choices seem really minor in the moment. How can it be “inevitable” when the shape of a technology can be swayed based on a person sitting in a room deciding on the fly to do one thing or another?

And with [the] progress [narrative], the tech industry perpetuates [the idea] that there’s only one form of AI, and so if we want progress from AI, we just have to accept the costs, meaning the colossal environmental impact, public health impacts, and so on. AI is a collection of many different technologies that work in very different ways, that have very different cost-benefit trade-offs. The large-scale AI models that Silicon Valley has imposed on everyone, which I describe as an imperial consolidation project, are the form of AI that is the most costly and has very unclear benefits that do not justify those costs.

But when talking about the kinds of progress that we would actually want—things like wanting climate change to be resolved, better healthcare for treating different types of diseases—these are types of progress that we already know how to build AI systems for, that look fundamentally different from these large-scale systems. Those types of AI systems are task specific. They’re often very, very small and cost extremely small amounts of energy to develop. They’re trained on highly curated data sets that do not require the type of labor exploitation and content moderation that goes into something like ChatGPT. We know that when we build these systems, we get benefits on the end, rather than this speculative, “maybe in the future we’ll reach AGI one day and it’ll solve everything.”

You describe as a “doctrine” in Silicon Valley that the only way to do this is to scale as big and as fast as possible. From an environmental perspective, the biggest costs come from the scale, right? It’s not necessarily the technology itself, but the size at which they are obsessed with doing this?

Exactly. My fundamental critique about Silicon Valley’s approach to AI is that they’ve created this idea—that you can no longer challenge—that in order to reach some kind of utopic state through the creation of AGI, you have to pour ever more data into these models and train them on ever larger supercomputers. Meta and OpenAI have both drafted plans to build supercomputers the size of, and that consume the same power as, Manhattan.

This is not scientifically sound. Before ChatGPT came out and before large language models became the obsession, AI research was heading in the exact opposite direction. People were looking to build smaller and smaller AI models, and it was all about tiny AI. There were all of these different techniques that researchers were experimenting with that found that you can build really powerful AI systems with minimal amounts of data. That understanding—that we can create new algorithms, create new training techniques, redesign AI systems from the ground up to use less and less resources—[has] been totally thrown out the window by Silicon Valley. It’s not scientifically or technically justified, and it has gotten us into this absolutely twisted state where we are burning down the earth in order to do something that’s wholly unnecessary.

We often talk, for good reason, about problems that are systemic and structural rather than individual. But this is one of those cases where it’s also very individual, in the sense that so few people are making these decisions. How much do you think this trajectory that we’re on is driven by individual desire for wealth and power and domination, versus actually believing in their hearts that these technologies will solve the problems that they claim to be able to solve? I’m wondering how you think about the psychology, and how much of our future is being dictated by a small number of people’s desire for domination over the rest of us.

I think in order to be one of these people you have to be self-delusional, and so I’m sure that they believe that they’re doing this out of goodness. I don’t think you can sustain waking up every morning and orchestrating these deals and decisions that are terraforming the earth and reshaping geopolitics and rewiring everything without deluding yourself into believing that you are on a mission for the benefit of all humanity.

That was not something I fully understood until I reported my book and spoke with so many people in this world. I did think that there was more distance between the rhetoric that they used publicly and their true beliefs. What I realized is that the rhetoric and what they believe—the distance collapses over time because you cannot continue to say all of these things and do all of these things without telling yourself that that is true. People’s beliefs become molded by what they need to accumulate more power and wealth.

I have seen people transition in their beliefs over time as they’ve become more and more part of the company and this world. It does completely transform them and who they think they are and what their worldviews are. I was talking to people back then who were like, I don’t really believe in the AGI thing but, you know, I get a good salary, I get a lot of resources to play around with cutting-edge research—who have now become complete AGI believers [who] do not even question for a second that they are building something that could be akin to a god and will have utopic qualities and this is the reason that they were brought to this earth.

I’m sure it’s intoxicating. I can see how people might get addicted to that feeling of being on some sort of divine mission.

Absolutely. And not just a divine mission. People also get addicted to the sheer size of the resources they can have access to. They get addicted to the amount of influence that they can have. I’ve had people say to me, Well, there are very few other companies where you can have ripple effects on billions of people around the world. They’re not saying that in a Machiavellian way. They’re saying, I want to change the world to be a better place, so what better leverage than being at a company that touches billions of people’s lives?

It’s really unsettling.

The realization that the self-delusion is that strong made me feel a lot more freaked out about where we could be heading if we don’t, as a society, rise up to contain the empire of AI.

You point out throughout the book the way that OpenAI sets the precedent for the rest of the industry, [such as] the obsession with scale. Another [example] is the rapid devolution from these [companies] being essentially research hubs to—in another parallel to the fossil fuel industry—very secretive. They have information, but they’re not publishing it. They’re not sharing it. Critical research gets suffocated. People get fired for criticizing the regime. Can you talk about the suppression of inconvenient information and how fast that shift occurred?

People who started becoming aware of the AI industry and its practices post-ChatGPT would think it’s perfectly normal that these companies are very secretive about their “intellectual property.” It’s not weird to them that these companies are tight-fisted about that kind of information and not transparent. For people who knew what the norms were in AI research before ChatGPT, this is a dramatic, 180 [degree] shift. Before, even though much of the tech industry was bankrolling AI research, the understanding was that in order to be competitive in attracting talent, you had to entice AI researchers by promising them that they would ultimately get to publish all of their work in public. AI research was still very academically driven. Even if you were a Google researcher or a Meta researcher or whatever, your street cred was still based on, how many papers are you publishing in top publications? How many citations are they getting?

Not only did they [OpenAI] close themselves off, in a great irony to their name, they closed the entire industry off by doing a lot of work early on to seed this idea that maybe opening up AI research could be dangerous, and therefore it was actually the responsible thing to shutter this research so that “bad actors” could not get access to it. That created a cascading effect across the industry. Suddenly it became clear to companies that they could still retain the top researchers and be competitive in the talent race while being closed off.

Very quickly, because most AI researchers were already employed by Big Tech, the majority of the research discipline became distorted based on what companies think is appropriate or not appropriate for the public to know. It’s not actually a science anymore. It’s just PR. And we have a completely inaccurate understanding now of what the true capabilities and limitations of these AI systems really are, because you cannot audit what these companies claim the systems can do without any transparency on how they were built, what data they were trained on, what tests are being run on them, what contexts they have been stress-tested in, and so on.

And that’s true for the environmental impacts and carbon emissions too?

We don’t know how much energy it takes to train a model. There are open-source models and there has been some fantastic research on using open-source models as a proxy for understanding the energy and environmental impacts of training these large-scale AI systems. But we only know what the real impacts of the commercial closed-door systems are when companies deem it okay to release that information, which has been very rare but has happened when they’ve come under extreme public pressure to do so. Google has, over time, slowly dribbled out some information about the environmental impacts of its models. Meta has tried to do a little bit of that to generate some good PR around their transparency and trying to position themselves as an open-source leader. But it is so limited, and the transparency is completely disproportional to what is needed based on the size of the environmental impact and energy consumption.

Something that you noted in the book [is] that to solve a challenge like the climate crisis, “We’ll also need more social cohesion and global cooperation,” and that is precisely what these empires are undermining. Could you unpack that a bit?

A revelation that I’ve had again and again in reporting on technology is that so often as a society we are vulnerable to believing that technology will solve social problems. Only social solutions will solve social problems. Climate change, at this point, is not a technology problem. We have an abundance of solutions that we could be deploying. It’s a lack of political will. It’s a lack of global cooperation. [It’s] a lack of these soft skills that have caused us to fail.

There’s this organization called Climate Change AI that I really love. It’s a nonprofit that was set up by a bunch of AI researchers around the world to think deeply about what can AI actually do to try and mitigate climate change. They document this long list of different computational challenges—because AI is a computational technology that lends itself to computational challenges—[where AI] could be helpful: integrating more renewable energy into the grid, more accurate weather prediction, more accurate climate disaster prediction, reducing the energy demands of buildings and cities. The AI techniques that they list have absolutely nothing to do with large-scale, generative AI systems. They’re all techniques that have been around for basically a decade.

[Big Tech] companies are creating AI systems that are straining resources, which heats up geopolitical competition over those resources. They are perpetuating an arms-race narrative that also erodes the willingness of different geopolitical powers to cooperate with one another. They are creating machines of misinformation and disinformation that are eroding the fabric of trust in various societies, which is also the building block for cohesion and cooperation. All of the things that we need to fortify are being hacked away by the industry.

Despite the power that these empires have, they’re not invulnerable. Could you share one of the stories of the communities that have successfully fought back?

One reason I think the empire analogy is extremely pertinent is because empires are made to feel inevitable, and yet they’ve always fallen in history. It’s because their foundations are extremely weak. You cannot sustain this degree of extractivism and exploitation at such a large scale without people revolting.

That is what I document happening in Chile. I spoke with activists in this community on the outskirts of Santiago called Cerrillos. They learned that Google was trying to build a data center in the one town that has access to a public drinking water source. In Chile, due to its history of dictatorship, most things were privatized, including water. But the one municipality that had free public drinking water was the municipality that Google was then like, Yep, we’re going to put our data center there and tap into this fresh water resource to cool our facilities.

This community lit up. Absolutely not. Activists started knocking on doors, posting flyers, creating memes and political art and all this stuff to educate their neighbors that this project is not going to benefit our community and is in fact taking the one extremely precious resource that we have. They made so much noise that it escalated all the way to both Google’s headquarters in Mountain View and to the national Chilean government. After extraordinary pressure where they’ve stalled this project for five years, the Chilean government has now created a roundtable for consulting residents and environmental activists on their data center plans and putting them in conversation with companies like Google and Microsoft.

It’s not a perfect solution. Activists have said the moment that they blink, everything can fall apart. They have to continue being vigilant, protesting, resisting, being on the streets. But it is a remarkable step that they got the government to even bring them to the table and a lesson to be learned by everyone around the world: If you remember that you still have agency, you can absolutely shape the trajectory of AI development by loudly, forcefully making that kind of trouble when companies are engaging in this imperial activity.